The Finish Line Problem

AI didn’t make writing easier. It made finishing impossible.

AI • Writing • Decision Making • Product Thinking • Productivity

I sat down to write about how AI is making our thinking shallow—how it’s producing work that looks better on the surface but hollows out the substance underneath, how we’re trading depth for polish without realizing the trade we’re making.

Then I opened AI and asked it to help me write it. By version 6, I’d completely forgotten what I was trying to say.

I kept rewriting the opening. Not because it was broken, but because I kept thinking it could be sharper, cleaner, more likely to make someone actually stop scrolling instead of skimming past like everything else they see. So I fed it to AI. Three seconds later I had something that sounded tighter, so I asked for another version, then one that sounded more like me, then one that sounded less like me, then one that sounded like the writer I wished I were instead of the one staring at the screen, no longer sure what the hell I was actually trying to say.

Somewhere around version 17 I looked at the screen and realized every single version was objectively better than the one before it—tighter structure, better hook, smoother flow—and I hated all of them.

So I scrolled back to the first thing I wrote. It was a mess. Too long, kind of awkward in places, probably needed another pass. But it had something the other 16 didn’t. It was actually mine. It came from me wrestling with an idea instead of me sitting there like a judge at a talent show deciding whether AI’s version sounded good enough to advance to the next round.

That’s when it hit me. AI didn’t just change how I write. It changed the moment before I write—the part where you’re supposed to stop thinking and just commit to saying something. And I’m pretty sure we’re all losing the ability to do that without even noticing it’s gone.

The Willpower Battery

There’s research that explains exactly what I felt. It comes from Roy Baumeister, a psychologist who spent the late ’90s studying how willpower actually works. His theory was dead simple: willpower isn’t some infinite well you can keep drawing from. It’s a battery. Use it too much and you’re running on empty.

The experiment he’s famous for is almost cruel in how elegant it is. He brought people into a room and put two bowls in front of them: one filled with chocolate chip cookies, one filled with radishes. Some people were allowed to eat the cookies. Others were told they could eat only the radishes. So they sat there staring at cookies they weren’t allowed to touch, fighting the urge, burning willpower on something that shouldn’t even be hard.

Then Baumeister gave everyone an impossible puzzle and timed how long they’d keep trying before they quit.

The people who got the cookies? They kept going for about 19 minutes. The people who had to resist them? About eight minutes. They’d already drained the tank fighting something as stupid as not eating a cookie, so by the time they hit the puzzle they had nothing left to give.

The point wasn’t about cookies. The point was that resisting temptation depletes you the exact same way making decisions does. Every time you evaluate an option, weigh whether to keep looking, decide if this is good enough or if there’s something better—you’re burning fuel. Eventually you hit empty and your brain just forces you to pick something, not because it’s perfect but because you’re done.

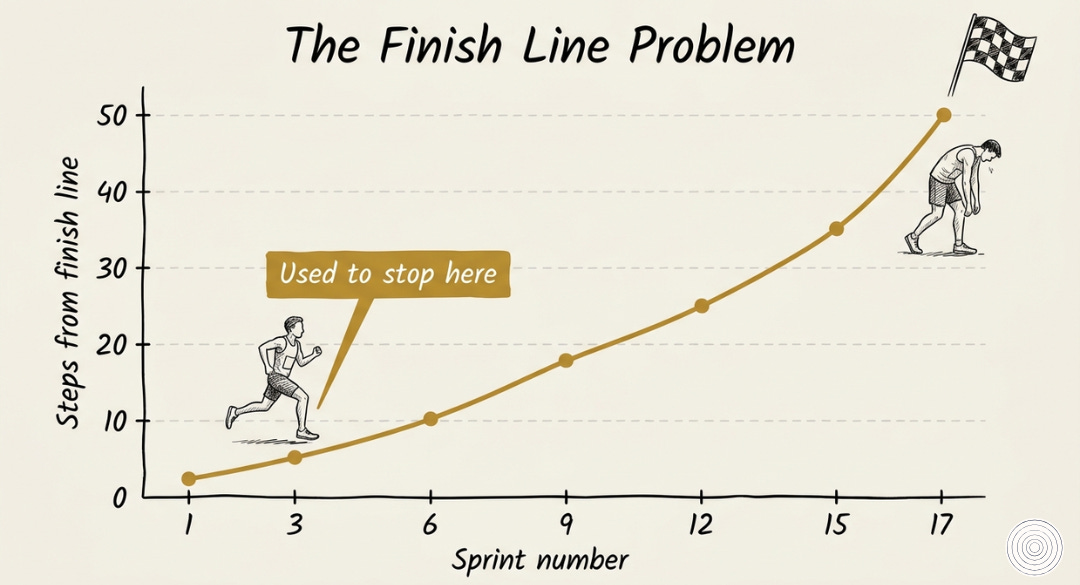

That depletion used to be the thing that made you finish. You’d hit version 3 or 4, feel that exhaustion creeping in like fog, and just commit. The constraint forced closure. You didn’t have a choice.

AI killed that constraint.

The Judgment Trap

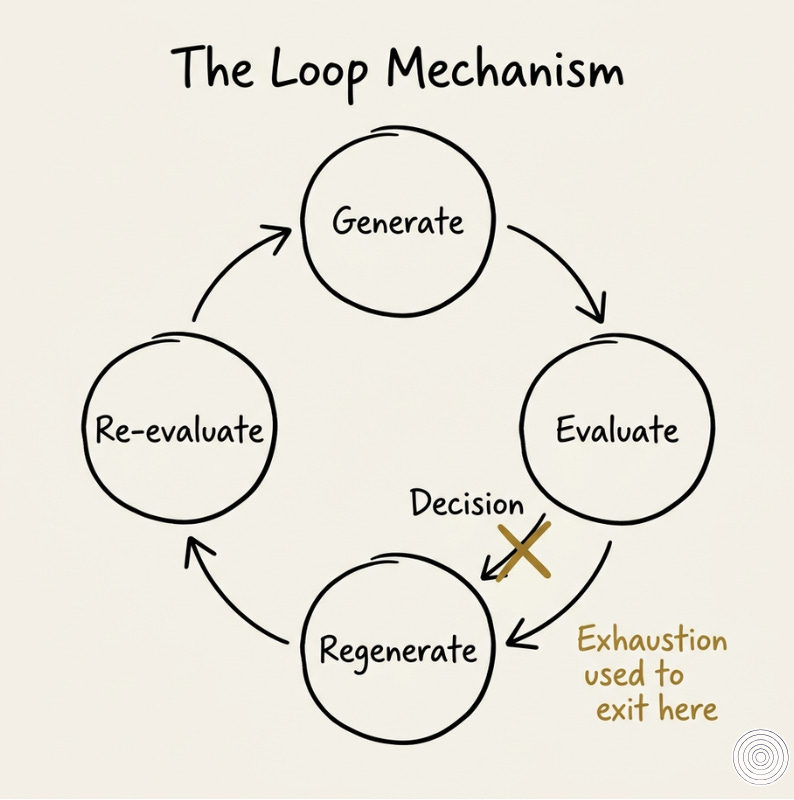

Now you can crank out version 12 without spending a single drop of the energy it would take to write it yourself. The AI does the grinding. You just sit there and judge. You’re still spending something (cognitive load, the mental effort of processing and comparing), but it’s not the kind of exhaustion that forces you to stop. It’s the kind that just makes you more confused.

And here’s what the battery metaphor quietly hides: judging something is nothing like building it.

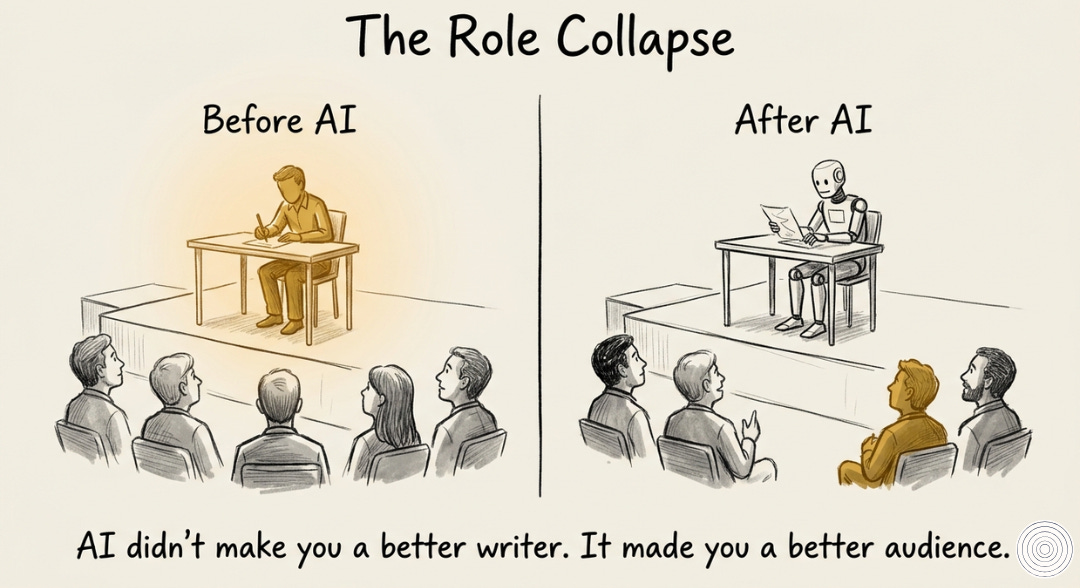

When you build an idea, you’re inside it. You know why every piece is there, what it’s supposed to do, where it’s trying to go. You’re in the mud wrestling with the thing itself. When you evaluate, you’re standing on the sidelines. You’re checking if it flows, if it sounds good, if it lands the way you want. You’re reading it like someone in the audience watching a performance, not like someone on stage trying to figure out what the hell comes next.

There’s supposed to be a wall between those two modes. The writer decides what to say. The audience decides if it worked. AI took a sledgehammer to the wall. It shoves you into the audience seat before you’ve finished being the writer, and once you’re sitting there eating popcorn it’s harder than you think to get back on stage.

You stop asking “What am I actually trying to say here?” and start asking “Does this sound good?” Those aren’t the same question. They’re not even in the same building.

The Replacement Problem

But the real problem isn’t just that you’re evaluating instead of building. It’s what you’re evaluating.

Before AI, you iterated on your own idea. Version 1, version 2, version 3—they were all just different attempts at sharpening the same thought. The core stayed the same. You were just trying to say it better.

Now? Every time you hit regenerate, AI hands you something completely different.

It doesn’t refine your thinking. It replaces it. You start with something rough—some half-formed thought trying to claw its way out of your brain—and ask AI to make it better. What comes back sounds polished and clean and nothing like what you actually meant. So you ask again. And again.

By version 6, the thing you started with is gone. You’re not refining anymore. You’re trying to choose between five completely different ideas, none of which came from you, none of which feel like yours, all of which sound better than anything you could’ve written but somehow worse than what you were trying to say before you forgot what that was.

And this is where the exhaustion really kicks in. You’re not choosing between versions of your idea. You’re choosing between strangers.

The evaluation becomes impossible because you’re staring at a lineup of things you didn’t build trying to figure out which one feels closest to the thing you’ve already lost.

The “I can’t do this anymore” moment still happens. But it doesn’t lead to a decision anymore. It just leads to asking AI to try one more time. Because regenerating costs nothing and committing to something that doesn’t feel like yours costs everything.

The Infinite Loop

So you’re stuck. You generate something, realize it’s not quite right, regenerate. The new one isn’t right either. Regenerate again.

It’s like running toward a finish line, and just as you’re about to cross it, someone moves it twenty feet farther and says “actually, that’s the real finish line.” So you keep running. Then they move it again. And again. The line never disappears. It just keeps moving.

The exhaustion that used to force you to cross the line? Still there. But now it doesn’t make you finish. It just makes you ask AI to run the race for you one more time.

What This Actually Looks Like

I think this is what’s happening to most of the writing you’re seeing right now. LinkedIn posts that are technically flawless and say absolutely nothing. Strategy docs that explore every possible angle and recommend none of them. Emails polished so smooth they’ve lost the edge they were supposed to have.

It’s not a creativity problem. It’s not even a writing problem. It’s a commitment problem.

People aren’t finishing their own thoughts anymore. They’re abandoning them halfway through and swapping them out for something that sounds better but means less.

Most of them don’t even realize they’re doing it because the original idea—the thing they actually sat down to say—disappeared somewhere between version 3 and version 8, and by the time they’re exhausted enough to stop regenerating they can’t remember what they were trying to say in the first place.

The Uncertainty

I don’t know where this ends up. Maybe it doesn’t matter. Maybe writing just becomes this—collaborative, AI-assisted, endlessly iterative—and nobody cares who had the original thought as long as the final output sounds professional enough to screenshot and share.

Or maybe we’re watching an entire generation forget how to finish their own ideas. Not because they can’t, but because they never have to.

If you’re reading this and you’re under 25, this might not feel like a problem yet. You grew up with this. Infinite options, instant alternatives, regenerate until it’s perfect. But here’s what I’m watching happen to people who didn’t grow up with it: they’re forgetting how to commit to something. And I genuinely don’t know if you’ll figure out some way around this that we can’t see yet, or if you’ll just hit the same wall we’re hitting now, just five years later when it’s harder to climb back.

I don’t have an answer. But I know this much: the skill that’s starting to separate people isn’t writing better prompts or knowing which AI to use. It’s recognizing when version 3 is good enough and walking away before version 4 exists.

That’s a completely different muscle. And most of us have stopped using it.

The Choice

Version 17 of this piece was cleaner and better structured. It was probably easier to read, and it probably would’ve performed better. But it wasn’t mine anymore.

This one is.

I still don’t know if I made the right call. But at least I know I made one.

What’s the difference between finishing your own thought and abandoning it for something that sounds better? Drop a note below. I’m curious which version you’d have published.